Better than the news

It sounds like a TED talk.

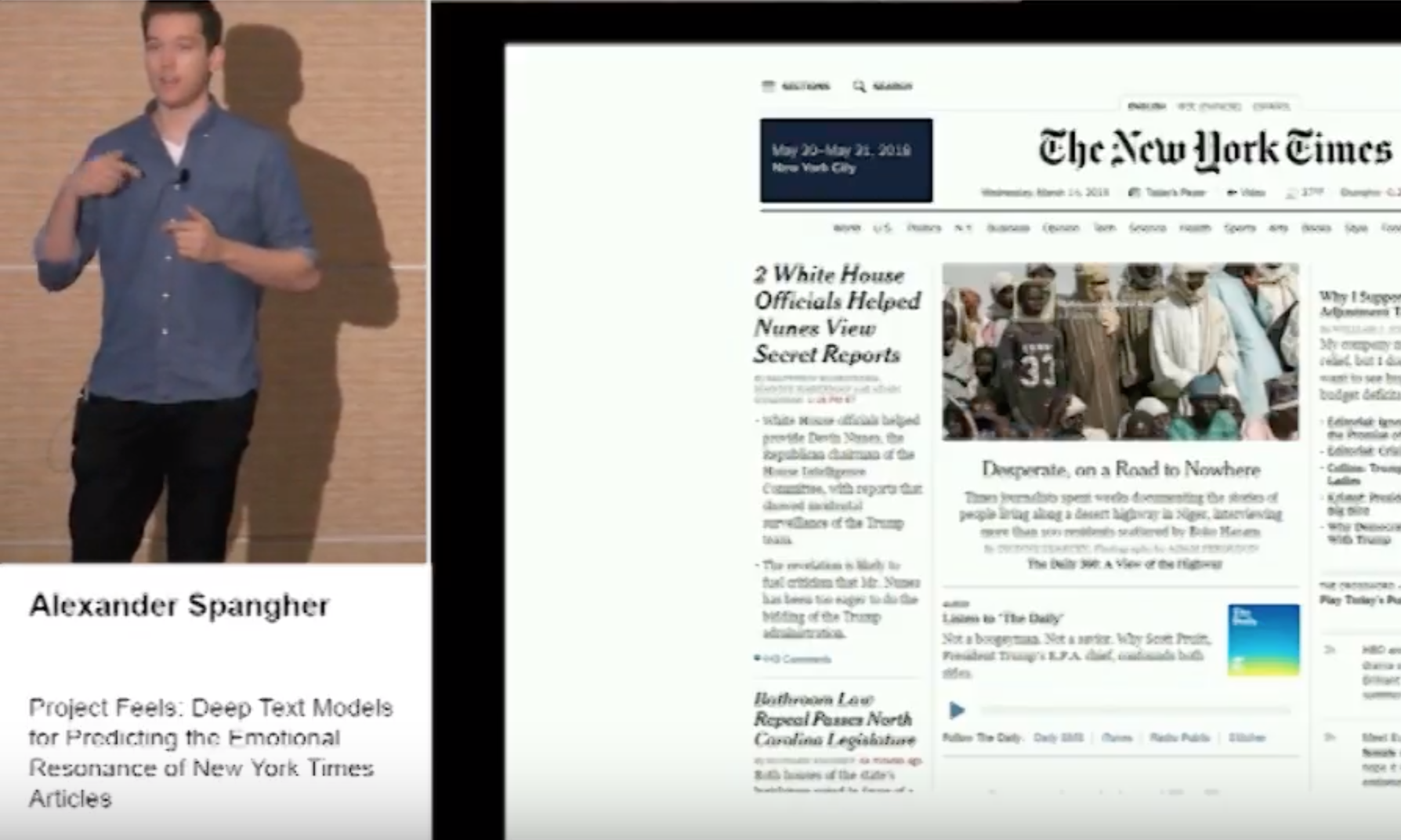

A data scientist from the New York Times takes the stage to share the project they have been working on:

“Project Feels”

When we look at the [NYTimes] homepage on any given day we notice a lot of stories there.

Is anyone feeling a bit tired these days?

What if we could predict the [emotional] effects that our articles would have on people?

We could recommend articles that make people feel a certain way

That last sentence is important.

Because feelings are habit-forming.

The speaker references it directly when discussing how to attract more New York Times subscribers.

Horror or Comedy?

In the short run, we’re masters of our own fates.

When you sit down and decide what screen to stare at for this evening’s 30 minutes of free time, you are subconsciously asking yourself this question:

How do I want to feel?

Netflix, Amazon Prime, Hulu, and Facebook all provide this as a service.

The New York Times does too.

It’s important to remember that.

The Good Gal vs. The Bad Gal

News businesses write two or more versions of every article.

Both are factually true.

Both have a marginal cost of production that is falling to $0 through automation.

Should we care if it’s factually correct if it has been weaponized to try to evoke a certain emotion?

What was this New York Times frontpage trying to provoke in you?

> The NYTimes doesn’t mind kneecapping Dems with unflattering photos if it drives shares/clicks/ad $ #capitalismbaby 🤘😝🤘 pic.twitter.com/LMBy08ozMl
>
> — Max Mautner (@maxmautner) October 19, 2018

This weaponization may not even be consciously performed by the New York Times.

It could be that a third party chooses which emotion they want (or know you want) to feel.

That choice might be made for you by newsfeed curation algorithms like those of Facebook or Twitter.

Better than news(feeds)

Ways to avoid emotional manipulation via content:

- Spend time with your family

- Focus on your business, your job, your skills

- Track your personal financial portfolio (use an account aggregator like Personal Capital, or Google Sheets)

If I can summarize my advice in a single point:

Follow what the financial markets are doing, not what the media organizations are doing.

Stay healthy, my friends.
